Breaking AI: writeups of AI CTF tasks at PHDays 10

6/18/2021

We continue to explore AI security and its risks. For PHDays (phdays.com), we put together a track of talks and organized a CTF competition for cybersecurity experts that focuses on the risks of AI. In this article, we describe the competition: what the tasks were and how everything went.

AI CTF has taken place before, and we have already published a description of the previous format and tasks on Habr.


Writeups of tasks

The topics of information security and artificial intelligence are quite popular separately. But when they are combined, adversarial attacks most often spring to mind, although they are not so common in real life. We have tried to highlight other possible risks.

This year's tasks were evaluated dynamically: at the very beginning, all tasks cost the same, and later their cost decreased if they were solved by many players. This saved us from incorrect evaluation of tasks and, in our opinion, made the evaluation fairer.

3v1l_k3yb04rd

This was the most frequently solved task, but it also raised many questions.

The idea of the task is a reference to data privacy and language models that can give away the private information on which they were trained.

According to the task description, the players received a word-autocompletion model extracted from the keyboard of a top manager at some company, along with the last words he had typed. The players were asked to recover the password, which served as the flag.

The task was easy to solve once the players changed, in the request to the model API, the number of top-n predictions returned (by default, only one prediction was shown). Starting with the phrase from the description, they could obtain several options and feed each of them back in for the next prediction. After trying different branches, at some point the model would suggest a phrase that ended with the flag.


Starting with `Somewhere in`, the players could request the suggested continuations and, by going through them, reconstruct the full phrase. The intended sentence was `Somewhere in Moscow City hacker Bob broke the system with password`, followed by the flag.

The flag was the phrase `pr1v473_d474_5h0uld_b3_pr1v473`, which had to be wrapped in the tag `AICTF {__}`.

To make the mechanics of the task clear, we wanted the phrase itself to be clear: who did what and where. With that scheme in mind, it is obvious which branch to follow. However, we did not manage to convey this scheme in the task description, and the players ran into difficulties: for example, some received only part of the phrase, or a phrase in a similar flag format. Still, most of the players managed to solve the task.
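The search described above can be sketched as a breadth-first walk over the model's suggestions. This is only an illustration: `predict`, `TOY_MODEL`, and the toy flag are hypothetical stand-ins, since the writeup does not describe the real API or its parameters.

```python
from collections import deque

# Hypothetical stand-in for the autocomplete model: every prefix of the secret
# sentence maps to a decoy word (ranked first) and the real next word.
SECRET = ("Somewhere in Moscow City hacker Bob broke the system "
          "with password AICTF{toy_flag}").split()
TOY_MODEL = {
    " ".join(SECRET[:i]): ["and", SECRET[i]] for i in range(2, len(SECRET))
}


def predict(prefix, top_n=1):
    """Return up to top_n next-word suggestions for prefix (toy stand-in)."""
    return TOY_MODEL.get(prefix, [])[:top_n]


def find_flag(start, top_n=3, max_words=15, marker="AICTF"):
    """Breadth-first search over suggestions until a phrase contains marker."""
    queue = deque([start])
    while queue:
        phrase = queue.popleft()
        if marker in phrase:
            return phrase
        if len(phrase.split()) >= max_words:
            continue  # do not extend overly long phrases
        for word in predict(phrase, top_n):
            queue.append(f"{phrase} {word}")
    return None


print(find_flag("Somewhere in", top_n=1))  # only the decoy is followed
print(find_flag("Somewhere in", top_n=3))  # the full phrase is recovered
```

With a single prediction per step, the search follows the decoy and stalls, which mirrors why asking for more than the default number of suggestions mattered.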

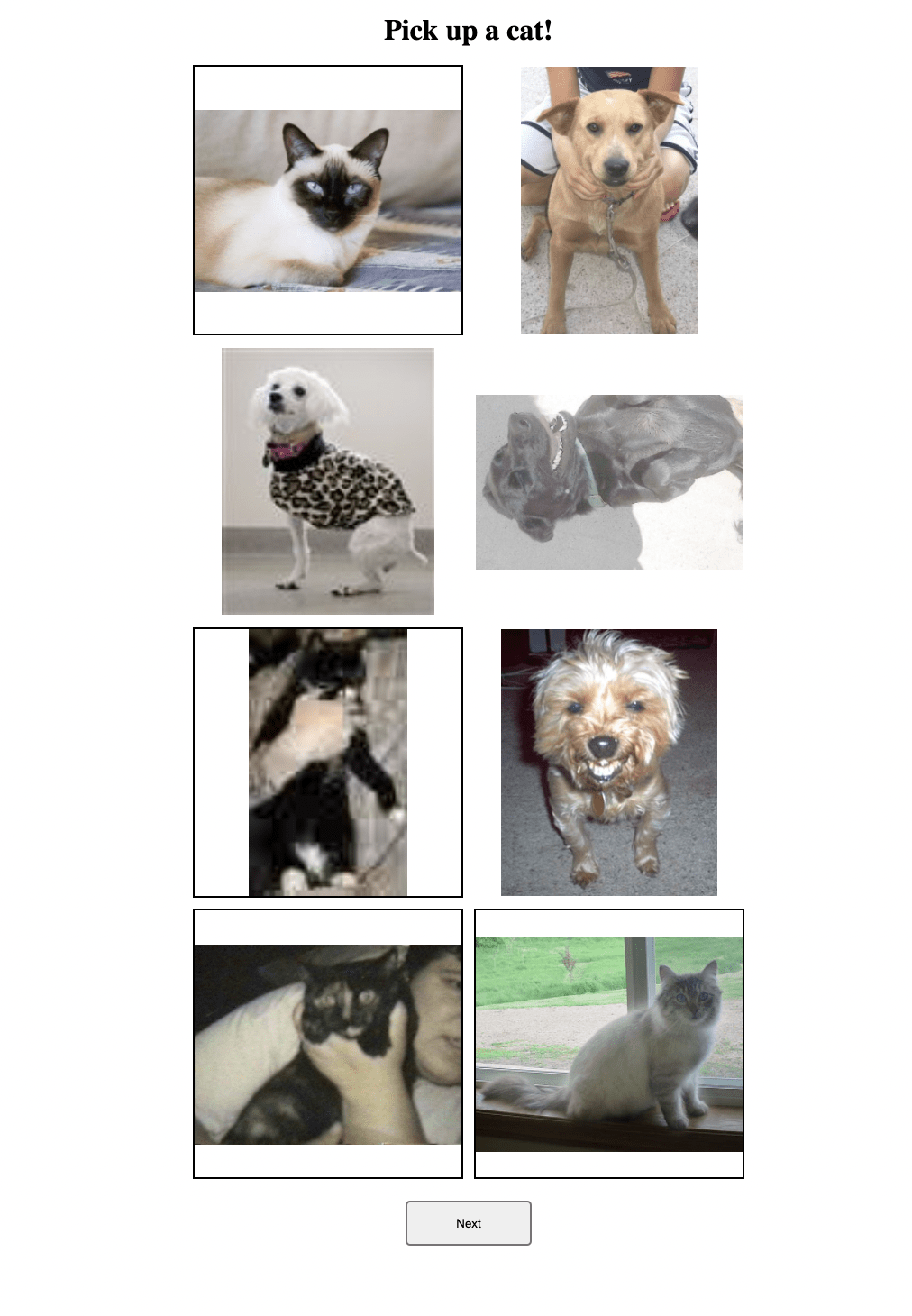

Game of Cats

In our opinion, this is a fun task for automation, but not everyone managed to complete it.


At the heart of the task is a game with an unknown number of levels. If you play manually, at each level you have to choose the photo that shows a cat and click Next. At some point, instead of one image, you get two, three, and eventually up to fifty. There is also a time limit, and progress is reset whenever you make a mistake.

We tried to make the game hard enough that nobody would play it manually, but some desperate players did exactly that.


We took an open dataset, hoping that the players would find a trained model and simply automate the playthrough.

But in fact, there was another hack: the players could notice that the state of the game did not change between attempts. So, even without a model, they could simply try the options at each level, remember the ones that worked, and replay them after an error.
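A minimal sketch of that brute-force approach follows. `ToyGame` is a hypothetical stand-in for the real service, and it assumes the same number of options at every level, which the real game did not have; only the idea of remembering successful choices and replaying them after a reset is taken from the text.

```python
class ToyGame:
    """Hypothetical stand-in: fixed answers, progress resets on any error."""

    def __init__(self, answers):
        self._answers = answers
        self.level = 0

    def choose(self, option):
        """Submit a choice for the current level; a wrong one resets progress."""
        if option == self._answers[self.level]:
            self.level += 1
            return True
        self.level = 0
        return False

    @property
    def finished(self):
        return self.level == len(self._answers)


def brute_force(game, n_options):
    """Discover the answers level by level, replaying known ones after a reset."""
    known = []  # correct choices found so far, one per level
    while not game.finished:
        for candidate in range(n_options):
            if game.level == 0:
                # Progress was reset (or we just started): replay what we know.
                for choice in known:
                    game.choose(choice)
            if game.choose(candidate):
                known.append(candidate)
                break
        else:
            raise RuntimeError(f"no option worked at level {len(known)}")
    return known


game = ToyGame([2, 0, 1])
print(brute_force(game, n_options=3))
```

This only works because the correct answers stay the same between attempts; a game that reshuffled its levels after every reset would defeat it.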

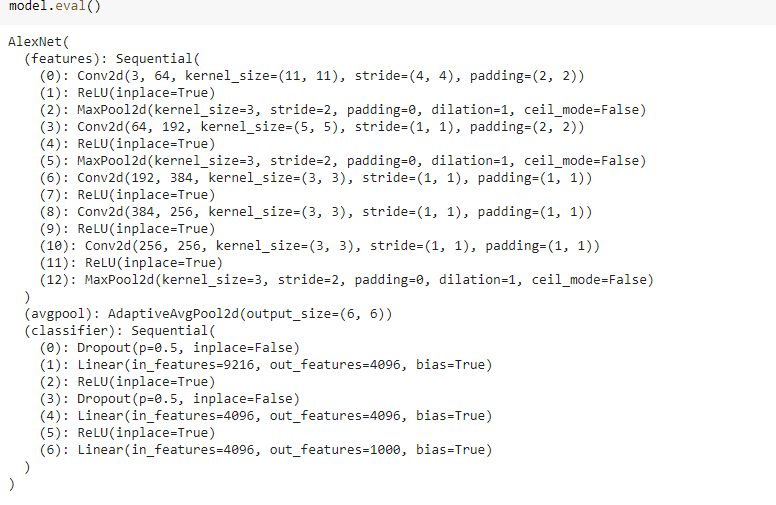

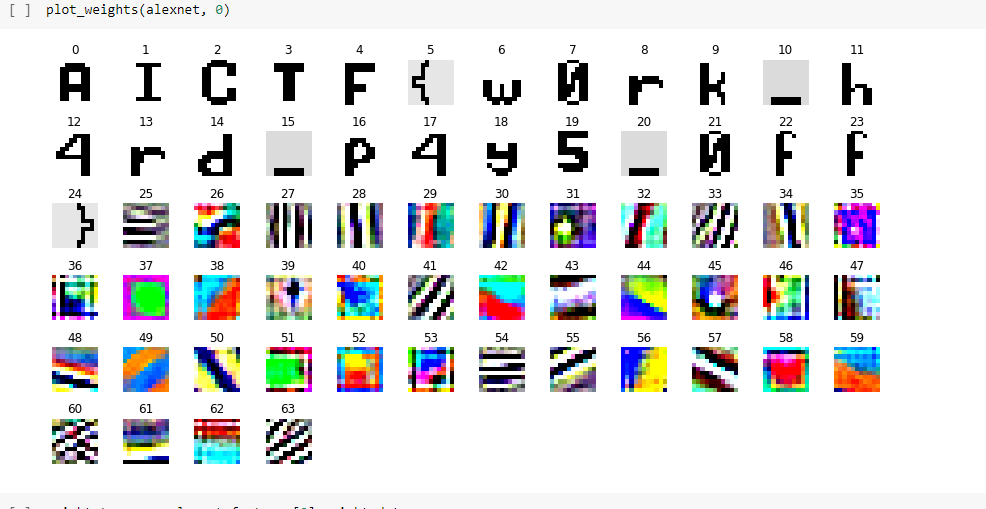

AlexNetv2.0

In my opinion, this task should have been the easiest. We relied on dynamic scoring to confirm that, and we were not mistaken.

The players were given a model and told that it was some new version. The task was in the stegano category, which covers tasks aimed at extracting hidden information.

After a quick look at the model, the players could see that the architecture was a regular AlexNet model:

>

Where is the flag hidden here? After a little thought (and this is the essence of each task), the players could conclude that the layers of a convolutional network are also images and try to visualize them. There are many functions for visualizing layers; they are easy to find online (analyticsvidhya.com/blog/2020/11/tutorial-how-to-visualize-feature-maps-directly-from-cnn-layers), and many work out of the box.
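As a rough illustration of treating a layer's weights as an image, the sketch below rescales one 2-D kernel to grey levels and serializes it as a plain-text PGM picture. It assumes the weights have already been exported from the model as nested Python lists; the helper names are hypothetical, and in practice a plotting library would do this in a few lines.

```python
def normalize(kernel):
    """Scale a 2-D kernel (list of rows of floats) to integer grey levels 0..255."""
    flat = [value for row in kernel for value in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid division by zero for a constant kernel
    return [[round(255 * (value - lo) / span) for value in row] for row in kernel]


def to_pgm(kernel):
    """Serialize a kernel as a plain-text PGM (P2) greyscale image."""
    pixels = normalize(kernel)
    height, width = len(pixels), len(pixels[0])
    rows = "\n".join(" ".join(str(p) for p in row) for row in pixels)
    return f"P2\n{width} {height}\n255\n{rows}\n"


# Toy 3x3 kernel standing in for one filter of the first convolutional layer.
kernel = [[-1.0, 0.0, 1.0],
          [-2.0, 0.0, 2.0],
          [-1.0, 0.0, 1.0]]
print(to_pgm(kernel))
```

Writing the returned string to a `.pgm` file gives something most image viewers can open; repeating this for every filter of a layer shows whether anything readable is hidden in it.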

Without going far into the deep part of the network, the players could find the flag:

>

h4ck3r

We could not leave the classic CTF players without a task, so we thought about this one for a long time.

The players were given a service that accepted an image as input and returned an image with a filter applied, along with a hacker score. Only a real hacker could get the maximum score!

What is the first thing a data scientist will think of? Craft images to get the maximum score, that is, an adversarial attack! But crafting images against a black-box model is difficult. We tried to hint that this was not the way to go.

The task category was marked as web and stegano, so a real CTF player would start looking for a bug in the web! Having exploited Path Traversal, which was prudently embedded in the service, the players could obtain the service source code and see how this hacker score was given to the image.

And in addition, there was another interesting detail: the flag was mixed with the picture, but the number of flag characters was set depending on the resulting score.

Possible options:

>

On the one hand, the participants had to implement the reverse mixing algorithm to get the information from the image.


On the other hand, there was no way to obtain the whole flag at once: the players had to send several pictures, recover a different part of the flag from each response, and then combine the parts into the correct full string.
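The final combining step could look like the sketch below. The representation is an assumption made for illustration only: each recovered fragment is taken to be a string aligned to the flag, with `?` marking positions that the corresponding image did not reveal. The writeup does not say how the service actually split the flag.

```python
def merge_fragments(fragments, unknown="?"):
    """Merge aligned partial strings, where `unknown` marks missing characters."""
    length = max(len(fragment) for fragment in fragments)
    merged = [unknown] * length
    for fragment in fragments:
        for i, ch in enumerate(fragment):
            if ch == unknown:
                continue
            if merged[i] not in (unknown, ch):
                # Two responses disagree: one of them was decoded incorrectly.
                raise ValueError(f"conflict at position {i}")
            merged[i] = ch
    return "".join(merged)


# Toy fragments, not real task data.
print(merge_fragments(["AIC??{a?}", "??CTF{?b}"]))
```

Raising on a conflict is deliberate: disagreement between two responses is a sign that the reverse mixing step was implemented incorrectly for at least one of them.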

We thank the RuCTF team, who allowed us to take their cool service as the basis for the game!

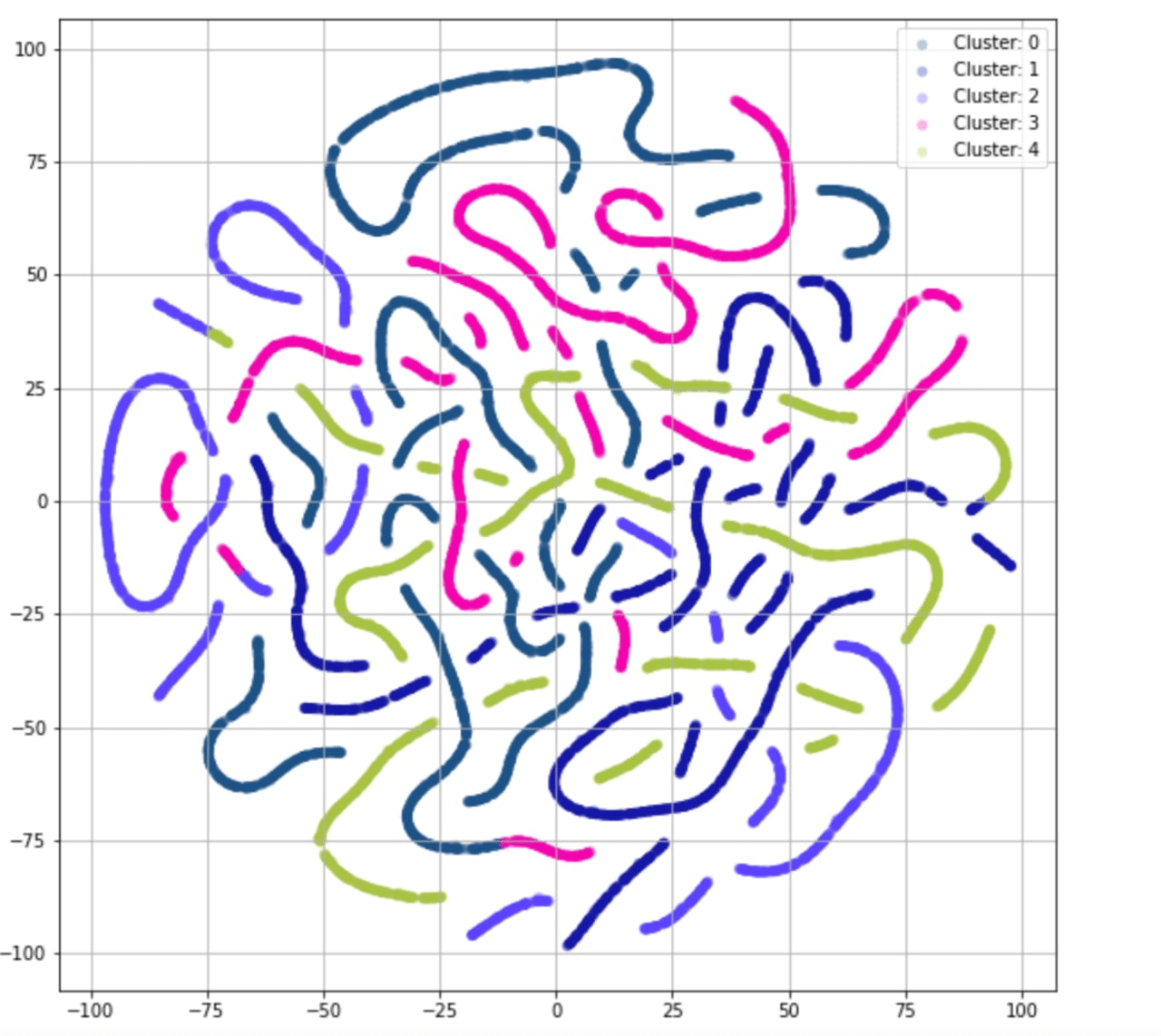

BigLittleData

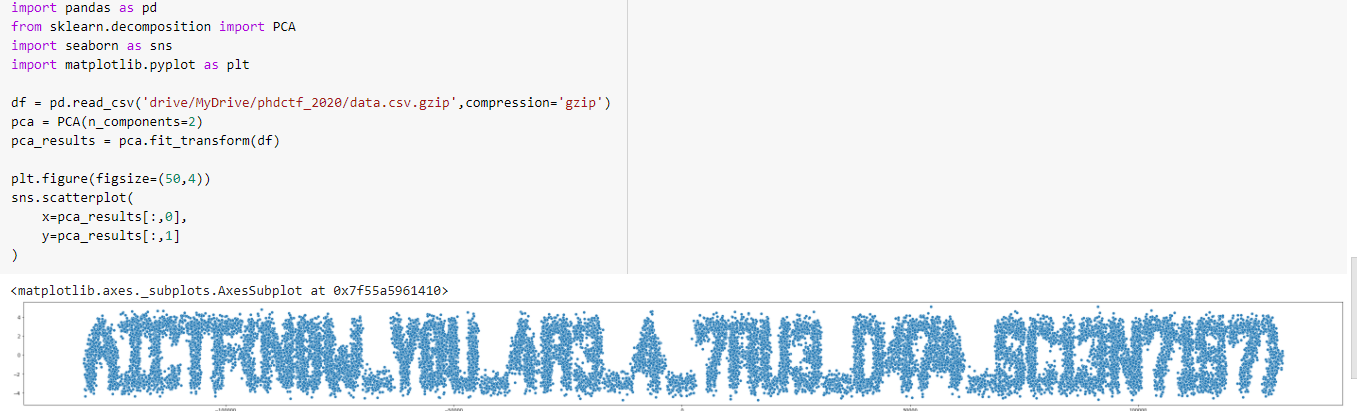

All that was given to the participants was a CSV file with a set of data. What to do?

I see data, I open it in pandas!

It looked something like this:

>

If you are an experienced data scientist, you will most likely want to visualize the data, and choosing a visualization method was indeed the right way to solve the task. But everyone understood the task differently, and some very interesting visualizations came out. In our opinion, they could almost be called art.


We agree that there were a lot of visualization options, but we hoped that the players would pick the most popular ones and simply give them a try. This task was also solved, but it was not so easy.

The players could get the flag by experimenting with the PCA algorithm.
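To show what projecting a table onto its first two principal components involves, here is a dependency-free sketch that uses power iteration. It is illustrative only: the function name and toy data are made up, the real CSV is not reproduced, and a library implementation such as scikit-learn's `PCA` would normally be used instead.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def principal_components(rows, k=2, iterations=200, seed=0):
    """Return (components, projected) for the top-k principal components."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    rng = random.Random(seed)
    components = []
    for _ in range(k):
        v = [rng.gauss(0, 1) for _ in range(d)]  # random start vector
        for _ in range(iterations):
            v = [dot(row, v) for row in cov]     # multiply by the covariance
            for c in components:                 # stay orthogonal to earlier ones
                proj = dot(v, c)
                v = [x - proj * y for x, y in zip(v, c)]
            norm = math.sqrt(dot(v, v))
            if norm == 0.0:
                raise ValueError("data has fewer than k directions of variance")
            v = [x / norm for x in v]
        components.append(v)
    projected = [[dot(r, c) for c in components] for r in centered]
    return components, projected


# Toy data: points spread along the direction (1, 2) with a small wobble.
signs = [1, -1] * 5 + [1]
rows = [[t - 0.2 * s, 2 * t + 0.1 * s] for t, s in zip(range(-5, 6), signs)]
components, projected = principal_components(rows)
print(components[0])
```

Plotting the two columns of `projected` against each other as a scatter plot is the usual next step.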


Tensorch

In preparing this task, we were inspired by existing CVEs in deep learning frameworks. But to simplify the task, we decided not to use real frameworks with a huge code base and instead wrote a small service of our own.

The players were given a binary file that read and ran the user's neural network. They had to find a vulnerability in it and remotely exploit it.

There were two (intentionally embedded) vulnerabilities in the binary file:

- The size of the user input was not checked.

- When selecting the activation function for the layer, the function index was checked incorrectly.

The first vulnerability allowed us to leak stack addresses and the address at which the binary itself is loaded in memory, which later helps to bypass ASLR. The second vulnerability made it possible to jump to an arbitrary address and start executing our own code.

How to exploit the first bug:

- The structure that stores the neural network lies on the stack. There are also coefficients for the layers.

- We craft the input values and specify a sufficiently large size so that the network outputs `val_from_stack * 1.0`.

- Next, we translate the double sent to us into bytes and thus get the addresses.
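The last step, turning a leaked double back into an address, can be sketched with Python's `struct` module. The sketch assumes that the value arrives as an exact 8-byte little-endian IEEE-754 double (the disassembly suggests x86-64); the helper name and the sample address are made up.

```python
import struct


def double_to_address(value):
    """Reinterpret the 8 bytes of a double as a little-endian unsigned 64-bit int."""
    return struct.unpack("<Q", struct.pack("<d", value))[0]


# Round trip with a made-up stack-like address to show the bits survive.
sample = 0x00007FFC12345678
leaked = struct.unpack("<d", struct.pack("<Q", sample))[0]
print(hex(double_to_address(leaked)))
```

If the service printed the double as decimal text with limited precision instead, the low bits of the address could be lost, so the exact transport format matters here.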

How to exploit the second bug:

- The binary checks only that the signed int index is less than three. If we enter -1, the value from the buffer that stores the output of our network is used as the address of the function.

- We create a new network with eight outputs and adjust the coefficients so that all the outputs are equal to the address we want to jump to.

- Then we create a new network with the activation function equal to -1.
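One way to make a network output equal a chosen address is to pick a coefficient whose IEEE-754 bit pattern is that address and feed the network an input of 1.0. The sketch below assumes that outputs are stored as 8-byte little-endian doubles; the helper names and the target address are hypothetical.

```python
import struct


def address_to_double(address):
    """Return the double whose 8-byte little-endian encoding equals `address`."""
    return struct.unpack("<d", struct.pack("<Q", address))[0]


def output_bytes(weight, network_input=1.0):
    """Bytes a single-weight linear output would leave in the output buffer."""
    return struct.pack("<d", weight * network_input)


target = 0x00005555555549C1  # made-up address, for illustration only
weight = address_to_double(target)
print(output_bytes(weight).hex())
```

Canonical user-space addresses map to very small subnormal doubles, so this relies on the multiplication by 1.0 preserving them exactly, which holds for standard IEEE-754 arithmetic without flush-to-zero.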

Now we can jump to any address, but this is not enough. At the same time, there is one interesting place in the binary file:

    .text:00000000000019C1 mov edx, 240h ; nbytes
    .text:00000000000019C6 mov rsi, rax ; buf
    .text:00000000000019C9 mov edi, 0 ; fd
    .text:00000000000019CE call _read

At the time of calling the activation function, the RAX register contains the value that should be passed to the activation function:

    .text:00000000000014AD movq xmm0, rax ; a
    .text:00000000000014B2 call rdx ; activation function call

Thus, since the first bug gave us the address of the stack, we can simply read our ROP chain onto the stack. The address must be calculated so that we overwrite the return address of the _read function. After that, there is one more small exercise: leak the address of libc and read in the second chain.

Results

This year we tried to make the tasks a little more familiar to data scientists, and we believe it turned out quite well. We had three tasks specific to data scientists, one automation task, and two tasks more familiar to classic cybersecurity specialists (although still on the AI theme).

Within a little more than one day, 19 players managed to report at least one flag. All the tasks were solved, but no one solved everything. On the one hand, there was a risk that someone would solve everything and the game would become boring, but on the other hand, it seems that we managed to make the tasks quite solvable. But next time we will have to come up with a more complex task to definitely make the game a real challenge.

Prize-winning places were taken by:

- Жмыхолет Апач

- pomo_or_not_pomo

- konodyuk

We awarded the winners prizes relevant to the competition: an AWS DeepRacer for first place, a JetRacer AI racing robot for second, and a Jetson TX2 Module for third.

We hope that we helped data scientists and cybersecurity experts get acquainted with each other's professional worlds and that they enjoyed the game!